The oil price war, capital market uncertainties, and the novel coronavirus leave no part of enterprise operations unaffected. These unprecedented times compel us to examine our operational efficiency and resilience, including how we operationalize data to address the needs of our diverse data consumers.

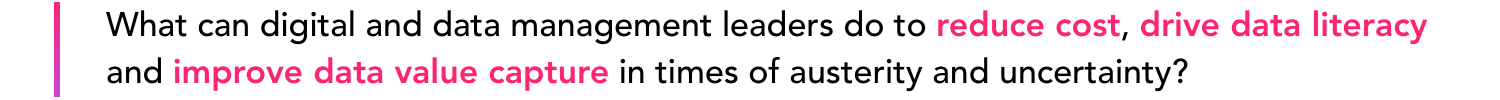

What’s wrong with the way we operationalize data today?

It is no secret that data management and analytics workflows have always been complex, siloed, and costly to enterprises all over the world.

A typical data pipeline for analytics with associated workflow challenges. Source: Gartner

Adding insult to injury,

- the rise of AI/ML, together with the scarcity of data scientists, is imposing its own set of requirements (often very different from those of conventional BI users) on data modelling, data source availability, data integrity, and out-of-the-box contextual metadata;

- data engineers working on industrial digitalization projects struggle to access key source system data, a situation reminiscent of non-industrial verticals around 2010;

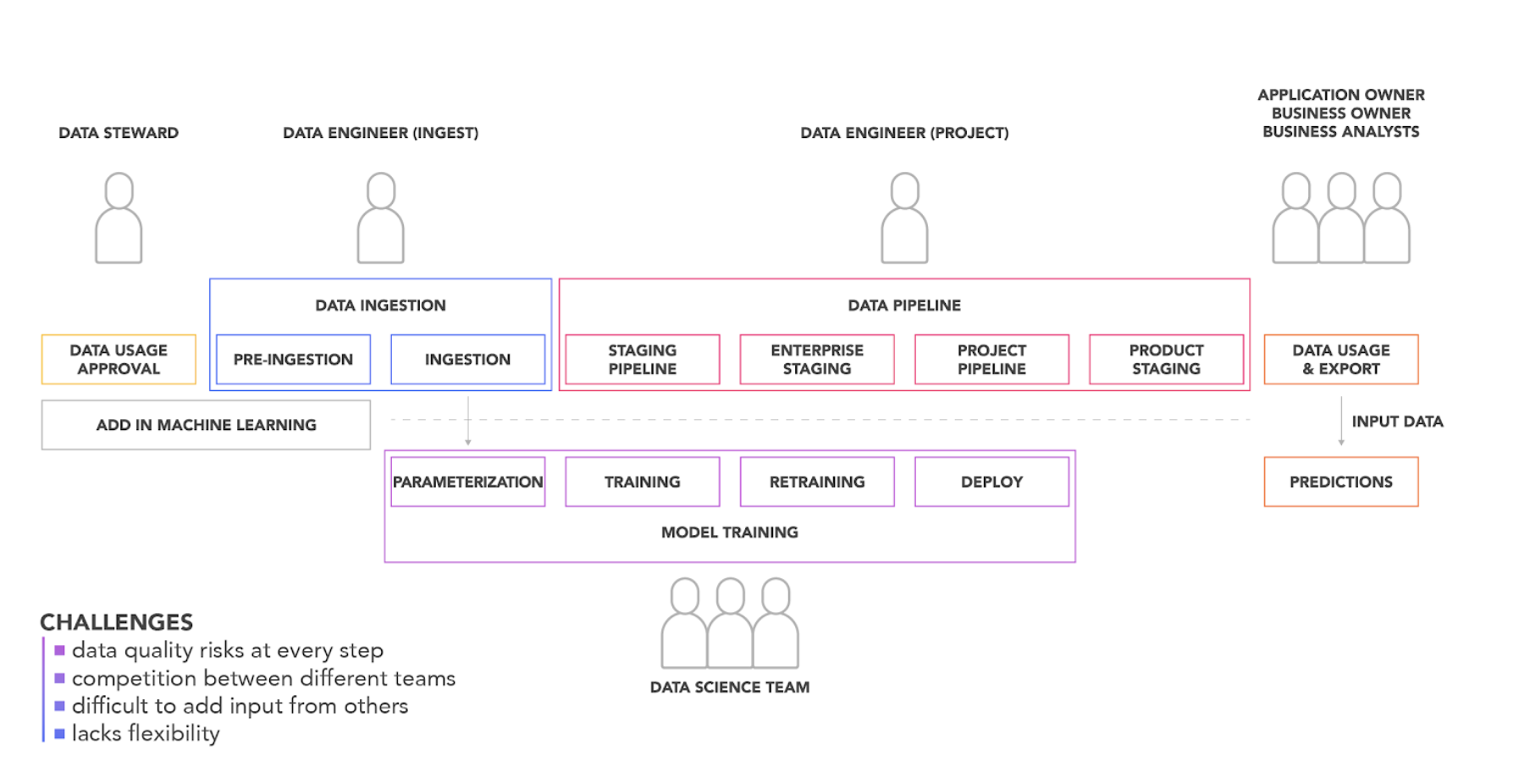

- industrial companies not only face the same challenges as, for example, their retail peers, but are also presented with a superset of challenges resulting from IT/OT convergence and the associated data velocity, variety, and volume, which go beyond what conventional IT alone handles; and

- rushing to show digital execution, many have embraced the AI hype, which has led to quickly demonstrable digital proofs of concept, yet is failing to yield truly operationalized, let alone scaled, concrete business OPEX value.

IT and OT are converging over time.

Your industry and your data culture are changing — you need DataOps

Similar to DevOps, DataOps has a compelling and topical value proposition. With DataOps, you reduce the number of specialized roles in your data-to-value workflows and give data consumers more autonomy, thus creating higher resilience and a leaner, more cost-efficient core for digital transformation.


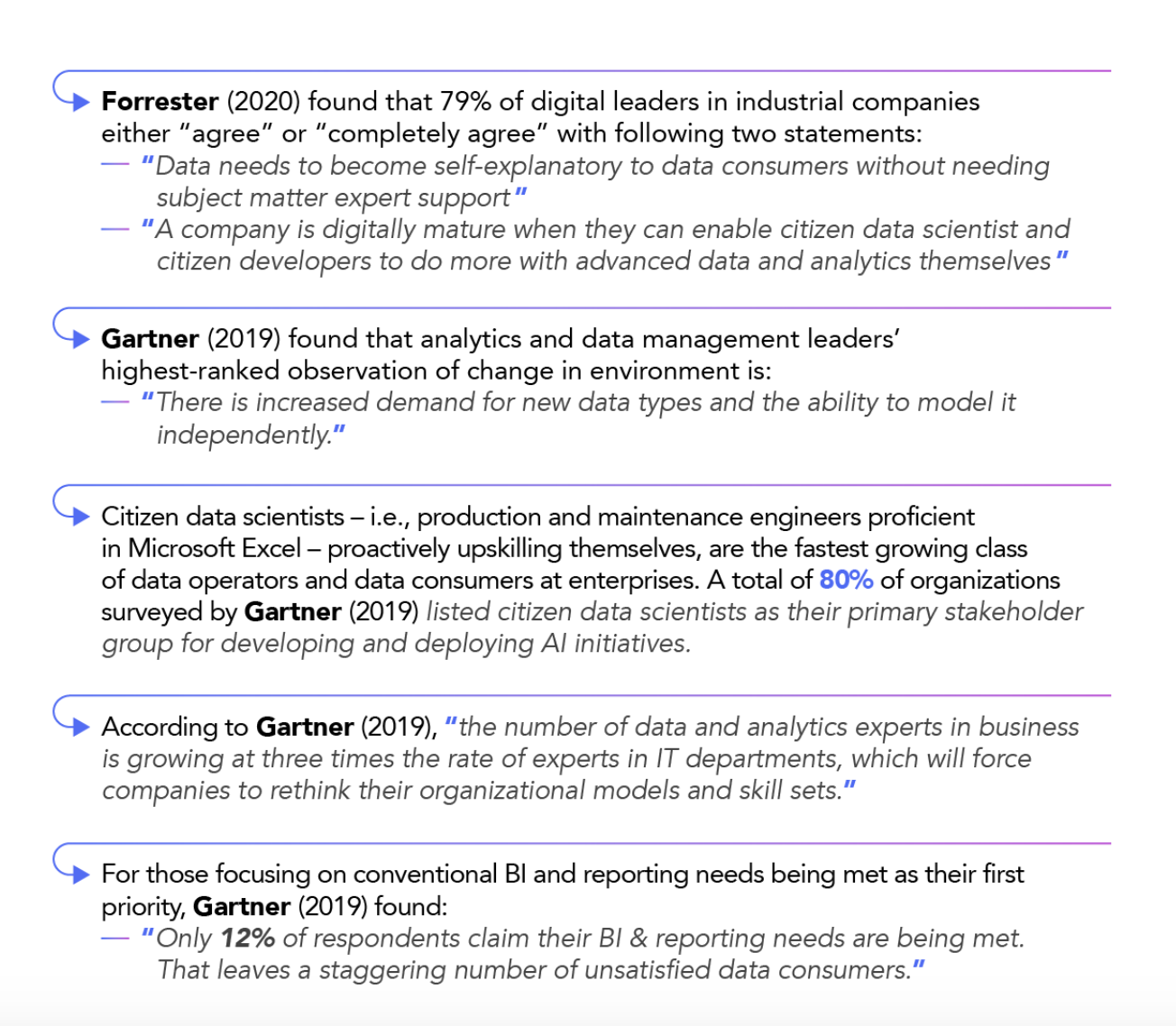

Not convinced DataOps is applicable to your organization and industry? Let’s look at the recent market research data.

Just as DevOps is the foundation for professionally developed software, DataOps and low-code form the technological foundation for citizen data science and citizen-developed applications.

DataOps is key to increasing data literacy. Data literacy is key to securing value from data and digital.

Enabling all data consumers to have instant access to all data with context -- what we at Cognite call contextualized data -- is not easy. We know.

But imagine what your data consumers can do when they are all empowered to speak data, to independently and collectively access all relevant, contextualized data, and to safely develop the next generation of productivity-enhancing digital applications -- unleashing the transformative potential of Excel 2.0 for your business.